Autonomous Driving Technologies

Indoor robots are now widely used in daily life, from fetching products in Amazon warehouses and delivering food and beverages in malls and restaurants to vacuuming and cleaning our own homes. However, ubiquitous deployment of indoor autonomous robots is only possible with affordable, reliable, and scalable indoor maps. LiDAR sensors, though very popular in indoor mapping, cannot generate precise indoor maps on their own because they cannot handle reflective objects, such as glass doors and French windows. Sonar sensors have also been used to construct indoor maps, but sonar-based maps suffer from inaccuracy caused by sonar cross-talk, corner effects, large noise, etc. Nonetheless, sonar sensors handle glass and reflective objects quite well, which complements LiDAR sensors. Developing sensor-fusion based SLAM algorithms is our current research focus. We are also researching RGB-D camera based SLAM algorithms.
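As a rough illustration of why the two sensors complement each other, the sketch below fuses per-cell occupancy probabilities from a LiDAR grid and a sonar grid by adding their log-odds. This is a generic textbook-style fusion under an independence assumption with a uniform prior, not the method of any paper listed on this page; the function names and the example probabilities are hypothetical.

```python
import math


def logit(p, eps=1e-6):
    """Convert a probability to log-odds, clamping to avoid infinities."""
    p = min(max(p, eps), 1.0 - eps)
    return math.log(p / (1.0 - p))


def fuse_cell(p_lidar, p_sonar):
    """Fuse two occupancy estimates for one grid cell.

    Assumes the two sensors are independent and the prior is 0.5,
    so the fused log-odds is simply the sum of the two log-odds.
    """
    fused_log_odds = logit(p_lidar) + logit(p_sonar)
    return 1.0 / (1.0 + math.exp(-fused_log_odds))


def fuse_grids(lidar_grid, sonar_grid):
    """Fuse two equally sized 2D grids (lists of rows) cell by cell."""
    return [
        [fuse_cell(pl, ps) for pl, ps in zip(row_l, row_s)]
        for row_l, row_s in zip(lidar_grid, sonar_grid)
    ]


if __name__ == "__main__":
    # Hypothetical glass-door cell: the LiDAR beam passes through (low
    # occupancy), while the sonar echo reports an obstacle (high occupancy).
    print(fuse_cell(0.2, 0.9))
```

In this toy case the fused cell ends up above 0.5, so the glass door is kept in the map even though LiDAR alone would have marked the cell as mostly free.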

Related Publications

Authorea

GaRField++: Reinforced Gaussian Radiance Fields for Large-Scale Robots View Synthesis (Authorea)

Zhiliu Yang, Hanyue Zhang, Xinhe Zuo, Yuxin Tong, Ying Long, and Chen Liu

Authorea Pre-print, February 4, 2025

DOI: 10.22541/au.173866895.50173418/v1

Abstract: This paper proposes a novel framework for large-scale scene reconstruction based on 3D Gaussian splatting (3DGS) and aims to address the rendering deficiency and scalability challenges faced by existing embodied AI tasks. To tackle the scalability issue, we split the large scene into multiple cells, and the candidate point-cloud and camera views of each cell are correlated through a visibility-based camera selection and a progressive point-cloud extension. To reinforce the rendering quality, three highlighted improvements are made in comparison with vanilla 3DGS: a strategy of ray-Gaussian intersection and a novel Gaussian density control for learning efficiency, an appearance decoupling module based on a ConvKAN network to handle uneven lighting conditions in large-scale scenes, and a refined final loss with the color loss, the depth distortion loss, and the normal consistency loss. Finally, a seamless stitching procedure is executed to merge the individual Gaussian radiance fields for novel view synthesis across different cells. Evaluation on the Mill19, Urban3D, and MatrixCity datasets shows that our method consistently generates higher-fidelity rendering results than state-of-the-art methods for large-scale scene reconstruction. We further validate the generalizability of the proposed approach by rendering on self-collected video clips recorded by a commercial drone.

NetMag

Unified Perception and Collaborative Mapping for Connected and Autonomous Vehicles (ieee)

Zhiliu Yang and Chen Liu

IEEE Network Magazine, 37(4), pp. 273-281, July/August 2023

DOI: 10.1109/MNET.017.2300121

Abstract: Advanced autonomous driving requires a holistic scene understanding and spatial-temporal evolving maps. In contrast to the common approach of problem decomposition, we advocate the philosophy of unification for scene perception and mapping toward modern autonomous vehicle systems. In this article, we first propose a unified framework to integrate multiple perception tasks into a single end-to-end deep neural network (DNN) architecture. The unified framework can generate temporally consistent perception by leveraging spatial affinity and temporal correlation of different frames. Then, our insight on temporal volumetric integration of a 4D dynamic map is presented. Next, we depict our collaborative mapping framework for a team of vehicles running the proposed perception module on top of the next generation vehicular communication network. The framework employs distributed place recognition and pose optimization on vehicles, and centralized final map integration on cloud servers. Lastly, two experiments on real-world datasets are showcased to demonstrate the benefits of unified perception and collaborative mapping.

SPIE DCS 2023

Millimeter Wave Radar-based Road Segmentation (spie)

Mark Southcott, Leo Zhang and Chen Liu

SPIE 12535, Radar Sensor Technology XXVII, 125350Q (14 June 2023)

Abstract: Research into autonomous vehicles has focused on purpose-built vehicles with LiDAR, camera, and radar systems. Many vehicles on the road today have sensors built into them to provide advanced driver assistance systems. In this paper we assess the ability of low-end automotive radar coupled with lightweight algorithms to perform scene segmentation. Results from a variety of scenes demonstrate the viability of this approach, which complements existing autonomous driving systems.

IROS 2021

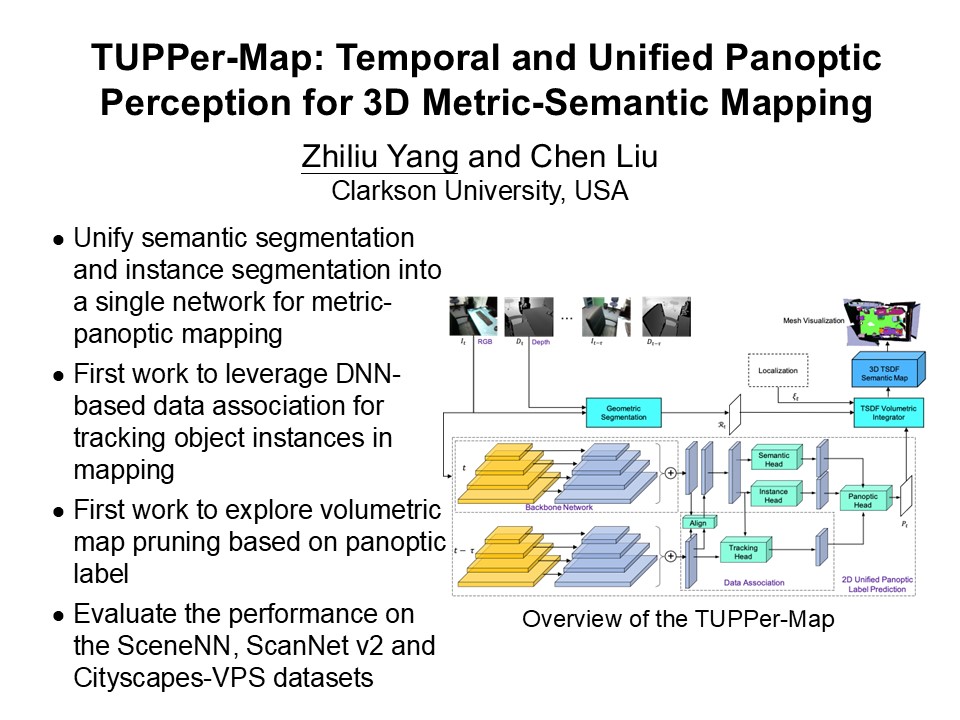

TUPPer-Map: Temporal and Unified Panoptic Perception for 3D Metric-Semantic Mapping

Zhiliu Yang and Chen Liu

The IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2021), September 27 - October 1, 2021, Prague, Czech Republic

Abstract: In this paper, we propose TUPPer-Map, a metric-semantic mapping framework based on unified panoptic segmentation and temporal data association. In contrast to previous mapping methods, our framework integrates the data association stage into the holistic pixel-level segmentation stage in an end-to-end fashion, taking advantage of both intra-frame and inter-frame spatial and temporal knowledge. First, we unify the two-branch instance segmentation network and semantic segmentation network into a single network by sharing the backbone net, maximizing the 2D panoptic segmentation performance. Next, we leverage geometric segmentation to refine the segments predicted via deep learning. Then, we design a novel deep learning based data association module to track the object instances across different frames. Optical flow of consecutive frames and alignment of ROI (Region of Interest) candidates are learned to predict the frame-consistent instance label. Finally, 2D semantics are integrated into a 3D volume by TSDF raycasting to build the final map. We evaluated the performance of our framework extensively on the SceneNN, ScanNet v2 and Cityscapes-VPS datasets. Our experimental results demonstrate the superiority of TUPPer-Map over existing semantic mapping methods. Overall, our work illustrates that using a learning based data association strategy can enable a more unified perception network for 3D mapping.
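The final step mentioned in the abstract, integrating 2D semantics into a TSDF volume, can be pictured with the minimal per-voxel sketch below. It uses the commonly used weighted running average for the truncated signed distance plus a simple label vote; this is an assumption-laden illustration rather than TUPPer-Map's implementation, and the `Voxel` class, the `integrate` function, the truncation distance, and the voting scheme are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Voxel:
    tsdf: float = 1.0          # normalized truncated signed distance in [-1, 1]
    weight: float = 0.0        # accumulated observation weight
    label_votes: Counter = field(default_factory=Counter)


def integrate(voxel, sdf_obs, label, trunc=0.1, obs_weight=1.0):
    """Fuse one ray observation into a voxel.

    sdf_obs is the signed distance (in meters) from the voxel to the
    observed surface along the ray: positive in front, negative behind.
    """
    if sdf_obs < -trunc:
        return  # voxel is far behind the surface (occluded), so skip it
    d = min(1.0, sdf_obs / trunc)  # normalize and truncate
    new_weight = voxel.weight + obs_weight
    # Weighted running average of the truncated signed distance.
    voxel.tsdf = (voxel.tsdf * voxel.weight + d * obs_weight) / new_weight
    voxel.weight = new_weight
    if abs(sdf_obs) <= trunc:
        voxel.label_votes[label] += 1  # only near-surface voxels get a label


def voxel_label(voxel):
    """Return the majority label of a voxel, or None if it has no votes."""
    if not voxel.label_votes:
        return None
    return voxel.label_votes.most_common(1)[0][0]


if __name__ == "__main__":
    v = Voxel()
    for sdf, lbl in [(0.05, "chair"), (0.0, "chair"), (-0.05, "table")]:
        integrate(v, sdf, lbl)
    print(v.tsdf, v.weight, voxel_label(v))
```

Averaging across frames smooths out depth noise, while the vote makes the voxel label robust to an occasional inconsistent 2D prediction.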

Demo Video

Digest

pi-Map: A Decision-Based Sensor Fusion with Global Optimization for Indoor Mapping (ieee)

Zhiliu Yang, Bo Yu, Wei Hu, Jie Tang, Shaoshan Liu, and Chen Liu

The IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2020), October 25-29, 2020, Las Vegas, NV, USA

Abstract: In this paper, we propose pi-map, a tightly coupled fusion mechanism that dynamically consumes LiDAR and sonar data to generate reliable and scalable indoor maps for autonomous robot navigation. The key novelty of pi-map over previous attempts is the utilization of a fusion mechanism that works in three stages: the first LiDAR scan matching stage efficiently generates initial key localization poses; the second optimization stage is used to eliminate errors accumulated from the previous stage and guarantees that accurate large-scale maps can be generated; then the final revisit scan fusion stage effectively fuses the LiDAR map and the sonar map to generate a highly accurate representation of the indoor environment. We evaluate pi-map on both large and small environments and verify its superiority over existing fusion methods.

Demo Video

MetroCAD 2020

2D Map Estimation via Teacher-Forcing Unsupervised Learning (ieee)

Zhiliu Yang and Chen Liu

The Third IEEE International Conference on Connected and Autonomous Driving (MetroCAD 2020), Detroit, USA, February 27-28, 2020

Abstract: Existing global optimization mapping is prone to time-consuming parameter fine tuning of scan matching. In this work, we propose a novel map estimation method via cascading scan-to-map local matching with a Deep Neural Network (DNN). Scan-to-map local matching first acts as a teacher to provide a coarse pose estimation, then a DNN is trained in an unsupervised learning fashion by exploiting the self-contradictory occupancy status of the point clouds. In addition, to cope with the mismatch caused by the variable number of points in a scan and the fixed input size of the DNN, a data hiding strategy is proposed. Experiments are conducted on three LiDAR datasets we collected from real-world scenarios. The visualization results of the final maps demonstrate that our method, teacher-forcing unsupervised learning, is able to produce a 2D occupancy map very close to the real world, which outperforms pure DeepMapping as well as ICP-warm-started DeepMapping. We further demonstrate that our results are comparable with those from the traditional Bundle Adjustment (BA) method, without the need for parameter fine tuning.

MetroCAD 2020

An Autoencoder Based Approach to Defend Against Adversarial Attacks for Autonomous Vehicles (ieee)

Houchao Gan and Chen Liu

The Third IEEE International Conference on Connected and Autonomous Driving (MetroCAD 2020), Detroit, USA, February 27-28, 2020

Abstract: Boosted by the evolution of machine learning technology, large amounts of data, and advanced computing systems, neural networks have achieved state-of-the-art performance that even exceeds human capability in many applications. However, adversarial attacks targeting neural networks have demonstrated a detrimental impact on autonomous driving. Adversarial attacks are capable of arbitrarily manipulating neural network classification results using input perturbations that are imperceptible to humans.

COMPSAC 2018

Teaching Autonomous Driving Using a Modular and Integrated Approach (ieee)

Jie Tang, Shaoshan Liu, Songwen Pei, Stephane Zuckerman, Chen Liu, Weisong Shi, and Jean-Luc Gaudiot

2018 IEEE 42nd Annual Computer Software & Applications Conference (COMPSAC), Tokyo, Japan, July 23-27, 2018, pp. 361-366

Introduction: Teaching autonomous driving is a challenging task. Indeed, most existing autonomous driving teaching activities focus on a few of the technologies involved. This not only fails to provide a comprehensive coverage, but also sets a high entry barrier for students with different backgrounds. Objective: The primary objective of this study is to present a modular, integrated approach towards teaching autonomous driving. Methods: We organize the technologies used in autonomous driving into modules. This is described in the textbook we have developed as well as a series of multimedia online lectures designed to provide technical overview for each module. Once the students have understood these modules, the experimental platforms for integration we have developed allow the students to fully understand how the modules interact with each other. Results: To verify this teaching approach, we present three case studies: an introductory class on autonomous driving for students with only a basic technology background; a new session in an existing embedded systems class to demonstrate how embedded system technologies can be applied towards autonomous driving; and an industry professional training session to quickly bring up experienced engineers to work in autonomous driving. The results show that students can maintain a high interest level and make great progress by starting with familiar concepts before moving onto other modules. Conclusions: Autonomous driving is not one single technology, but rather a complex system integrating many technologies. Our modular and integrated approach is an effective method in teaching autonomous driving.

IOV 2017

Distributed Simulation Platform for Autonomous Driving (springer)

Jie Tang, Shaoshan Liu, Chao Wang and Chen Liu

The 2017 International Conference on Internet of Vehicles (IOV 2017), Kanazawa, Japan, November 22-25, 2017

Abstract: Safety and reliability are the paramount requirements when developing autonomous vehicles. These requirements are guaranteed by massive functional and performance tests. Conducting these tests on real vehicles is extremely expensive and time consuming, and thus it is imperative to develop a simulation platform to perform these tasks. For simulation, we can utilize the Robot Operating System (ROS) for data playback to test newly developed algorithms. However, due to the massive amount of simulation data, performing simulation on a single machine is not practical. Hence, a high-performance distributed simulation platform is a critical piece in autonomous driving development. In this paper we present our experiences of building a production distributed autonomous driving simulation platform. This platform is built upon the Spark distributed framework, for distributed computing management, and ROS, for data playback simulations.
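The idea of spreading recorded-data playback over many workers can be sketched as follows. This toy example uses a local thread pool from the Python standard library as a stand-in for a cluster; it does not use Spark or ROS, and `split_log`, `replay_chunk`, and `distributed_replay` are hypothetical names chosen only for illustration.

```python
from concurrent.futures import ThreadPoolExecutor


def split_log(messages, num_chunks):
    """Split a recorded message list into contiguous, time-ordered chunks."""
    if not messages:
        return []
    size = -(-len(messages) // num_chunks)  # ceiling division
    return [messages[i:i + size] for i in range(0, len(messages), size)]


def replay_chunk(chunk, algorithm):
    """Play one chunk back through the algorithm under test."""
    return [algorithm(msg) for msg in chunk]


def distributed_replay(messages, algorithm, num_workers=4):
    """Replay all chunks in parallel and return outputs in original order."""
    chunks = split_log(messages, num_workers)
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        # map() yields results in submission order, so ordering is preserved.
        parts = list(pool.map(lambda c: replay_chunk(c, algorithm), chunks))
    return [out for part in parts for out in part]


if __name__ == "__main__":
    # Hypothetical "algorithm": square each recorded sensor reading.
    print(distributed_replay(list(range(10)), lambda x: x * x, num_workers=3))
```

The sketch only works this simply when the algorithm under test treats each message independently; stateful algorithms would need chunk boundaries chosen so that each worker can start from a known state.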

Senior Design Project:

Autonomous Roaming Robot using LiDAR (ARRL)

EE 416/464 Computer Engineering Senior Lab, Fall 2020

Team: Joshua Frank, Evan Perry, Evan Nguyen, Rod Izadi

Final Poster

IEEE

The IEEE Computer Society Special Technical Community (STC) on Autonomous Driving Technologies is an open online community and forum striving to explore innovative solutions to open problems in the area of autonomous driving. The STC serves those who have an interest in autonomous driving, which involves the integration of different technology areas including intelligent sensors, machine perception, machine localization, planning and control, operating systems, high performance embedded systems, as well as cloud computing. Dr. Chen Liu served as chair of the STC on Autonomous Driving Technologies from 2018 to 2020.